AI CONTROL. FOR FINANCIAL INSTITUTIONS.

The AI control firm for the institutions that steward the world's capital.

Rad H. Pasovschi, Founder & CEO, Institutional AI

AI is a given. Control is not.™

Most institutional discourse on AI has, until now, framed the problem as one of governance. Frameworks have been written. Policies have been issued. Committees have been formed. This work has been necessary and, in many cases, valuable. It is also insufficient.

Governance describes what an institution intends to do. Control describes what it can demonstrably do.

Boards that mistake the first for the second create uncovered fiduciary and regulatory exposure. That is a control failure.

Control in financial services has a shape. It must be deep, and it must be wide.

Deep — control runs the full AI stack. The AI you rely on does not run on software alone. It runs on agents, models, data centers, compute, and power. A regulator asking you to prove control is not satisfied by application logs — the questions that decide your exposure (where it executed, under whose key custody, in which jurisdiction) live below the application layer. Control that stops at the software is not control.

Wide: control reaches across the chain. You do not operate most of the AI you depend on. It runs inside your asset managers, your asset servicers, and your providers, firms whose systems produce the numbers that land in your books and fall under your duty. Control that stops at your own walls leaves the rest of your exposure unguarded. The duty does not stop at the perimeter. Neither can the control.

Five ecosystems deep. The full delegation chain wide. That is the shape of fiduciary control of AI — and it is why the institutions that steward capital cannot borrow a control model built for a single organization's own operations.

A board that asks these three questions and records honest answers will produce, in a single sitting, the most accurate picture of its real AI control posture it has ever held. The pattern of answers — not any single one — is the finding.

Governance is policy. Control is evidence. These three questions separate the two. A board that can get them answered with technical proof — not provider assurance — has control. One that cannot has just found its exposure.

And these are only the beginning.

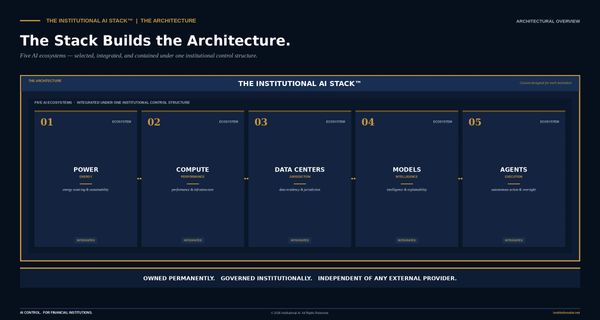

Five AI ecosystems — Power, Compute, Data Centers, Models, and Agents — connected under one institutional control structure. Custom-designed for each institution. Owned permanently. Independent of any external provider, including Institutional AI.

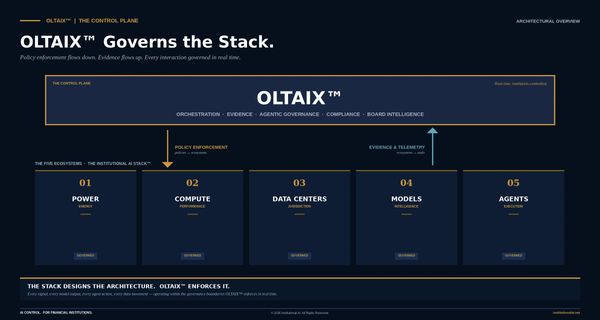

The Control Tower that governs the Stack. The difference between a security camera and a lock. OLTAIX™ is the lock.

The outcome. When the Stack and OLTAIX™ operate as designed, every AI system in your institution is owned, governed, auditable, and under your command.

The stewards of institutional capital. Where AI control failure is not theoretical — it is regulatory, fiduciary, and existential.

Every institution in the chain faces both axes — deep, across the full stack, and wide, across the firms it does not operate. What changes is where you sit.

ASSET OWNERS. Pension funds, sovereign wealth funds, endowments, and foundations sit at the top of the control cascade. You delegate the work but keep the duty — the AI you depend on runs inside your managers and servicers, on infrastructure you cannot see. Control that stops at your own walls is not control. Set the standard. The cascade follows.

ASSET MANAGERS. Your edge lives in your models, your data, your process — and proprietary strategy is only proprietary if control reaches down to where it actually lives, not just the layer that describes it. The owners above you will require you to prove it. The providers beneath you hold pieces you must account for. Control is the moat.

ASSET SERVICERS. You sit at the intersection of your own regulatory obligations and the control requirements of every client you serve. Your clients' control runs through your systems — so your posture is theirs, and they will require you to prove it. And when you produce a NAV or a reconciliation, the trust rests on the full stack it ran on, not the workflow that logged it. Your clients' control is your control.

WEALTH MANAGERS. Your clients share information with you they share with no one else. The AI processing it should enforce the fiduciary promise technically — and that promise lives in custody and infrastructure, beneath the application layer where most controls stop. It must hold across every platform you rely on but do not own. For most wealth managers, it does not.

RETIREMENT PLAN PROVIDERS & TPAs. You administer the retirement security of millions under ERISA. The DOL does not care whether the model is yours or rented. It cares who holds the logs — provable control down the stack to where the data and execution actually sit, across infrastructure you do not own. Not assurance at the surface. Evidence beneath it.

PRIVATE EQUITY. AI control gaps do not disappear at close — they transfer to you. A target's AI runs across managers, vendors, and infrastructure it does not operate, and you inherit every dependency. Diligence is not the target's policies — it is provable control down the full stack, because that is where the inherited exposure lives. Find the gaps before you own them.

Institutional AI is the AI control firm — a category created because the existing ones do not fit.

Consultants sell advice, then leave. Vendors sell subscriptions; access is not ownership. Integrators build on the infrastructure — and the institution stays dependent on them. None give an institution independent control over the AI it runs on.

That is what we design. And control runs two ways.

Deep — the full stack the institution's AI depends on: agents, models, data centers, compute, power. We build it as the Institutional AI Stack™, owned by the institution permanently.

Wide — the chain it delegates to but still answers for: managers, servicers, providers running AI on its behalf. OLTAIX™, the control plane, enforces control across the stack and verifies it across the chain.

We measure where control stands — through the AI Control Assessment™ and 5×5 Control Matrix™ — then design the architecture that closes the gap.

Independent of every platform, model, and provider. Owned by you, not rented from us.

Each of the board questions above demands a clear, defensible answer. Most institutions cannot give one — not because control is absent, but because it has never been measured, benchmarked, or documented in a form leadership can stand behind.

The AI Control Assessment™ establishes where the institution stands today — across all 25 intersections of the 5×5 Control Matrix™.

It measures deep — through the full stack: agents, models, data centers, compute, power. It measures wide — across the chain the institution delegates to but still answers for.

Then the Institutional AI Stack™ and OLTAIX™ close the gaps it reveals. The Stack is the architecture the institution owns; OLTAIX™ is the control plane that enforces control across the stack and verifies it across the chain.

Measure first. Then build.

The Assessment is where it starts.

Score the institution's current control posture across all 25 intersections of the 5×5 Control Matrix™. Receive a benchmarked profile and strategy recommendation. Complimentary for qualifying institutions.

A direct conversation about whether AI control architecture is the right fit for the institution's specific regulatory, governance, and operational context.

AI is a given. Control is not.™

© 2026 Institutional AI. All Rights Reserved. 5×5 Control Matrix™, OLTAIX™ and The Institutional AI Stack™ are trademarks of Institutional AI. This material is provided for informational purposes only and does not constitute legal, regulatory, investment, or other professional advice.